Portfolio · Cooling

Cooling that scales with the load.

Five years ago, "cooling" mostly meant perimeter CRAC units and a healthy aisle-containment plan. Then GPUs showed up, density doubled, and the rules changed. We carry cooling solutions across the whole density curve — from quiet wall splits in a closet to liquid-fed rear doors handling 80 kW per rack — and we'll help you size to where you'll be in three years, not just where you are today.

Why cooling now

Heat density is the question. Everything else is the answer.

Cooling decisions get made at the rack. A 4 kW/rack server room and a 60 kW/rack AI cluster don't call for the same product family; they aren't even the same conversation. Here's the rough density map of what we typically deploy.

1–5 kW per rack

Traditional server rooms and edge IT. Perimeter cooling with good airflow management is usually sufficient. Wall splits handle smaller rooms and IDF closets cleanly.

5–15 kW per rack

Modern enterprise IT. Mix of in-row supplemental and perimeter, hot-aisle/cold-aisle containment, sensor-driven adjustment. The bracket where most organizations live.

15–30 kW per rack

AI inference, GPU clusters, dense compute. In-row falls behind. Rear-door heat exchangers (passive or fan-assisted) become the right answer for retrofit and new builds alike.

30+ kW per rack

AI training and HPC. Air alone can't carry the load. CDU-fed rear-door exchangers, direct-to-chip cold plates, full liquid loops. New territory for most facilities — we'll help you plan it.
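As a rough sketch, the density map above reads as a lookup from per-rack load to a starting cooling approach, and "size to where you'll be in three years" becomes a simple compound-growth projection. The tier boundaries come from the map. The function names, the way exact boundary values are assigned, and the example growth rate are assumptions for illustration, not a sizing method.

```python
# Hypothetical helper: maps a per-rack load (kW) to the density tiers on this page.
# Assumption: a load exactly on a boundary (e.g. 15 kW) falls in the lower tier.

TIERS = [
    (5.0, "1-5 kW: perimeter cooling with airflow management; wall splits for small rooms"),
    (15.0, "5-15 kW: in-row supplemental plus perimeter, with containment"),
    (30.0, "15-30 kW: rear-door heat exchangers (passive or fan-assisted)"),
]
LIQUID_TIER = "30+ kW: CDU-fed rear doors, direct-to-chip cold plates, liquid loops"


def cooling_tier(kw_per_rack: float) -> str:
    """Return the density-map tier for a per-rack load in kW."""
    if kw_per_rack <= 0:
        raise ValueError("rack load must be positive")
    for upper_kw, label in TIERS:
        if kw_per_rack <= upper_kw:
            return label
    return LIQUID_TIER


def projected_load(kw_today: float, annual_growth: float, years: int = 3) -> float:
    """Compound today's per-rack load forward; the growth rate is an assumption you supply."""
    return kw_today * (1 + annual_growth) ** years


if __name__ == "__main__":
    today = 12.0
    future = projected_load(today, annual_growth=0.35)  # assumed 35%/yr growth, illustrative only
    print(f"today:  {today:.1f} kW -> {cooling_tier(today)}")
    print(f"year 3: {future:.1f} kW -> {cooling_tier(future)}")
```

With the assumed 35% annual growth, a 12 kW rack today projects to about 29.5 kW in three years, which moves it out of the in-row tier and into rear-door territory.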

Manufacturer · Light through liquid

Vertiv. Cooling across the full density curve.

Vertiv's cooling line covers the full density curve — wall splits for closets, perimeter CRAC and CRAH units for traditional data center floors, in-row supplemental cooling, rear-door heat exchangers for high-density retrofit, and CDU systems for liquid cooling at the AI tier. Same vendor across the curve means tighter integration, fewer service relationships, and one set of monitoring tools instead of three.

KAT-5 is a Vertiv channel partner with installation capability across the cooling portfolio.

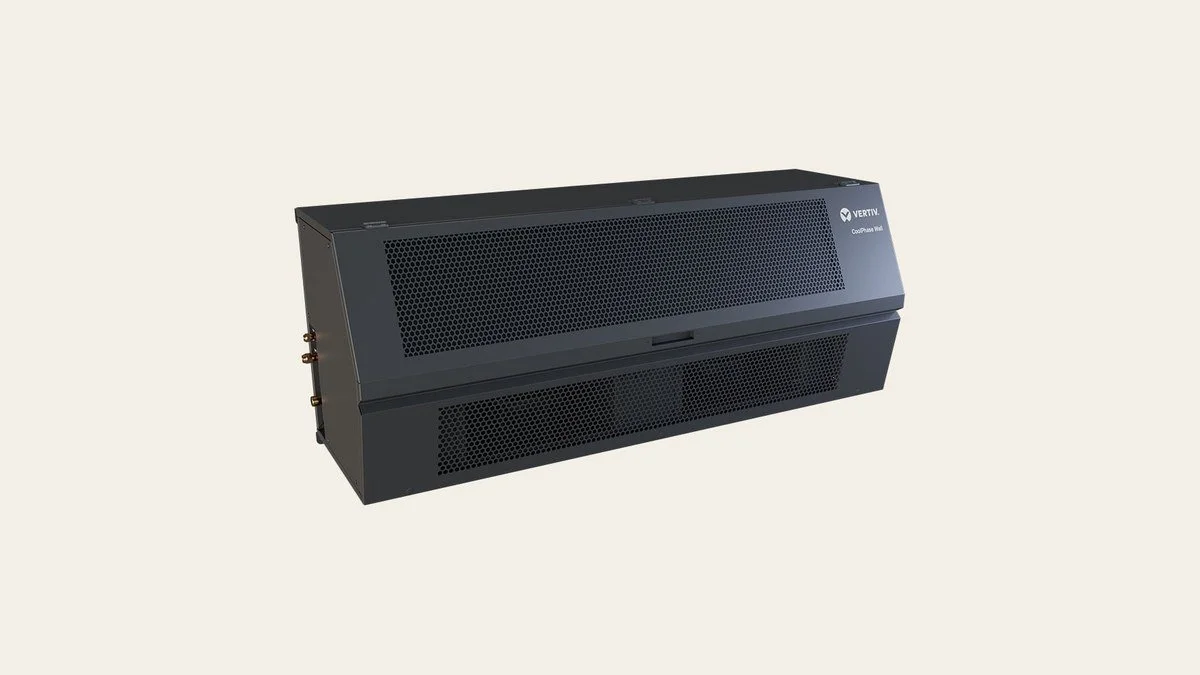

Vertiv CoolPhase

Wall-mounted split system · IDF closets & small server rooms

Wall-mounted, ductless split A/C system designed for IT spaces — IDF closets, small server rooms, edge sites where a roof unit and a perimeter CRAC are both overkill. Dedicated outdoor condenser, indoor evaporator, year-round operation including freezing-temperature heat-mode for cold-climate edge sites.

Where it fits: any IT space that's currently cooled by a comfort A/C (or worse, a window unit) and needs a real, building-grade solution that won't quit in February.
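Wall splits are usually rated in BTU/hr or tons rather than kW, so a quick conversion helps when checking a closet's IT load against a unit's rating. The constants are standard (1 W ≈ 3.412 BTU/hr; 1 ton of refrigeration = 12,000 BTU/hr). The 20% headroom default and the function name are illustrative assumptions, and a real sizing should count every heat source in the room, not just the IT gear.

```python
# Hypothetical sizing helper for a small IT closet.
# Unit conversions are standard; the headroom margin is an assumption.

BTU_PER_HR_PER_WATT = 3.412      # 1 W ≈ 3.412 BTU/hr
BTU_PER_HR_PER_TON = 12_000.0    # 1 ton of refrigeration = 12,000 BTU/hr


def closet_cooling_need(it_load_watts: float, headroom: float = 0.20) -> tuple[float, float]:
    """Return (BTU/hr, tons) needed to remove the IT load plus a safety margin."""
    btu_per_hr = it_load_watts * BTU_PER_HR_PER_WATT * (1 + headroom)
    return btu_per_hr, btu_per_hr / BTU_PER_HR_PER_TON


if __name__ == "__main__":
    btu, tons = closet_cooling_need(3_000)  # e.g. a 3 kW IDF closet
    print(f"{btu:,.0f} BTU/hr ≈ {tons:.2f} tons")
```

For a 3 kW closet with 20% headroom, this works out to roughly 12,300 BTU/hr, or about one ton.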

Vertiv Liebert Perimeter CRAC & CRAH

Perimeter cooling · DX (CRAC) and chilled water (CRAH)

The traditional data center cooling backbone. Vertiv's Liebert perimeter units come in both DX form (CRAC, computer room air conditioners with a self-contained refrigerant circuit) and chilled-water form (CRAH, computer room air handlers fed from a building or rooftop chiller plant). Variable-speed fans, modern controls, and integration with Vertiv's site-level monitoring, its counterpart to Schneider's EcoStruxure.

Where it fits: data center floors with established airflow plans, raised-floor distribution, or under-rack air supply. The known answer at the room level — and still the right one for a lot of buildings.

Vertiv CoolChip CDU

Cooling Distribution Unit · feeds liquid cooling at the rack or row

The CDU is the heart of liquid cooling: a heat exchanger that isolates the facility chilled-water loop from the precision liquid loop that actually touches IT hardware (rear doors, cold plates, immersion). It's the component that makes liquid cooling deployable in buildings that were never designed for it.

Where it fits: any project where in-row air can no longer keep up — typically 25 kW/rack and above. AI training clusters, dense GPU compute, HPC. Pairs with rear-door heat exchangers or direct-to-chip cold plates downstream.
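Whatever sits downstream, the CDU's job comes down to an energy balance: the heat picked up on the IT side has to be carried by coolant flow, Q = ṁ·c_p·ΔT. A rough sketch, assuming plain water (c_p ≈ 4.186 kJ/kg·K, density ≈ 1 kg/L) and a supply-to-return temperature rise you choose. Glycol mixes, real ΔT limits, and pump and piping losses all change the answer, and the function name is hypothetical.

```python
# Hypothetical estimate of coolant flow needed on the IT-side loop of a CDU.
# Assumes plain water: c_p ≈ 4.186 kJ/(kg·K), density ≈ 1.0 kg/L.

WATER_CP_KJ_PER_KG_K = 4.186
WATER_DENSITY_KG_PER_L = 1.0


def required_flow_lpm(heat_kw: float, delta_t_k: float) -> float:
    """Litres per minute of water needed to absorb heat_kw with a delta_t_k temperature rise."""
    if delta_t_k <= 0:
        raise ValueError("temperature rise must be positive")
    # Q = m_dot * c_p * dT, with kW = kJ/s, so m_dot comes out in kg/s.
    mass_flow_kg_s = heat_kw / (WATER_CP_KJ_PER_KG_K * delta_t_k)
    return mass_flow_kg_s / WATER_DENSITY_KG_PER_L * 60.0


if __name__ == "__main__":
    for rack_kw in (30, 60, 80):
        print(f"{rack_kw} kW at 10 K rise: {required_flow_lpm(rack_kw, 10):.0f} L/min")
```

Under those assumptions, a 60 kW rack with a 10 K rise needs roughly 86 L/min of water, and halving the allowed rise doubles the flow.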

Vertiv Rear Door Heat Exchanger

Rack-level liquid cooling · passive or fan-assisted

A rear-door heat exchanger replaces the rear door of a standard rack with a finned coil and (optionally) fans, fed by chilled water from a CDU. Hot server exhaust passes through the coil before it ever reaches the room, so the air leaving the rack is already back near intake temperature, the room stays cool, and per-rack cooling capacity goes up dramatically.

Where it fits: dense retrofit (deploy in an existing data center without re-architecting the cooling plant) and new high-density builds where you want close-coupled cooling without the cost or complexity of full direct-to-chip. Handles 60–80 kW/rack depending on configuration.
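Because all of a rack's server exhaust crosses the door's coil, the airflow the coil must handle follows from the same kind of energy balance on the air side, Q = ρ·V·c_p·ΔT. A minimal sketch, assuming roughly room-temperature air at sea level (ρ ≈ 1.2 kg/m³, c_p ≈ 1.005 kJ/kg·K) and a server inlet-to-exhaust rise you pick; the function name is hypothetical. It also puts rough numbers on why density matters: required airflow grows linearly with rack load, so a 60 kW rack needs twelve times the airflow of a 5 kW rack at the same temperature rise.

```python
# Hypothetical air-side estimate: how much server exhaust air a rear-door coil must handle.
# Assumes air density ≈ 1.2 kg/m^3 and c_p ≈ 1.005 kJ/(kg·K) (sea level, near room temperature).

AIR_DENSITY_KG_PER_M3 = 1.2
AIR_CP_KJ_PER_KG_K = 1.005
CFM_PER_M3_PER_S = 2118.88  # 1 m^3/s ≈ 2,118.88 cubic feet per minute


def rack_airflow_cfm(heat_kw: float, delta_t_k: float) -> float:
    """Airflow (CFM) that carries heat_kw out of a rack with a delta_t_k air temperature rise."""
    if delta_t_k <= 0:
        raise ValueError("temperature rise must be positive")
    volume_m3_s = heat_kw / (AIR_DENSITY_KG_PER_M3 * AIR_CP_KJ_PER_KG_K * delta_t_k)
    return volume_m3_s * CFM_PER_M3_PER_S


if __name__ == "__main__":
    for rack_kw in (5, 30, 60):
        print(f"{rack_kw} kW at 12 K rise: {rack_airflow_cfm(rack_kw, 12):,.0f} CFM")
```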

Manufacturer · In-row cooling

APC. In-row, integrated.

Since acquiring Uniflair, Schneider has narrowed the APC cooling portfolio to a single focus: row-level cooling, integrated tightly with APC racks, containment, and the EcoStruxure monitoring stack. For projects already standardized on APC for power and racks, the natural fit is to keep cooling in the same family.

KAT-5 is an authorized APC reseller and installation partner.

APC Uniflair InRow DX

In-row direct expansion cooling · self-contained refrigerant

The InRow DX is APC's direct expansion in-row cooling unit: a refrigerant-based system that sits inside a row of racks, pulls hot air directly from the hot aisle, conditions it, and supplies cool air back to the cold aisle. A self-contained refrigerant loop means no facility chilled-water plumbing is required.

Where it fits: small-to-mid data center rooms (typically up to ~15 kW/rack), facilities without existing chilled-water infrastructure, and retrofit projects where adding a chilled-water loop isn't feasible. The "drop in and turn it on" option for in-row cooling.

APC Uniflair InRow Chilled Water

In-row chilled water cooling · facility CHW loop required

Same in-row architecture as the DX, but fed by facility chilled water rather than a self-contained refrigerant loop. Higher capacity per unit, better efficiency at scale, and the right answer when the building already has chilled water infrastructure (which most modern data centers do).

Where it fits: data centers with existing chilled water plants, larger room deployments where the higher capacity per unit reduces footprint, and facilities standardizing on a single coolant medium across the room. Handles higher density per row than the DX.

What KAT-5 adds

Cooling is a building problem.

The hardware sits in your room, but it depends on the rest of the building: the chiller plant, the airflow, the containment, the controls, the rooftop. Specifying the right cooling system means understanding all of it, not just the spec sheet. We've walked the rooms, modeled the loads, called the AHJ (authority having jurisdiction), supervised the install, and stayed through commissioning. The product is the easy part. We're the part that makes it actually work.

Let's talk

Tell us what you're cooling.

Send us the load list, the floor plan, or just the question. We'll come back with a recommendation, a budgetary number, and an honest read on whether the gear you're already considering is the right fit. No pressure to commit until we both agree it makes sense.